Tracing the evolution of the GUI through the years

When people today sit down at their computers to use facilities management solutions – or any other type of software, for that matter – they take for granted that everything they need appears on a screen, and that they can point and click at the options of their choosing. In 2013, with all the advanced technology that’s at our fingertips, we forget that computing wasn’t always this easy.

Today’s Software as a Service solutions are remarkably easy to use for many reasons. Chief among them is their highly visual nature, and they have the growth of the graphical user interface to thank for that.

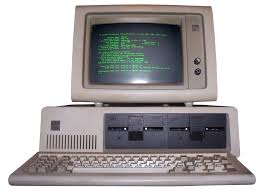

The GUI has come a long way. At the beginning of the Information Age, we used our computers in the most primitive way possible, by typing plain text into a command prompt. There was no mouse, no color – nothing visually engaging at all. Then the GUI came along and changed everything.

Starting in the seventies

In the early 1970s, researchers in Palo Alto began building computers around a groundbreaking input device, the mouse, which had first been demonstrated in the late 1960s. Their work was the first step toward letting people control their operating systems by hand. It took a while, however, for these devices to make their way into people’s computers and OSes.

It wasn’t until cheap personal computers began to be introduced – including the Apple I in 1976 and the Commodore PET in 1977 – that the groundwork was laid for graphical interfaces to become accepted. The modern look and feel of a computer, with a screen that includes graphics and icons you can click on, began to take form.

Evolving in the eighties

Before 1980, almost nobody bought the idea that individuals would want personal computers in their homes. PCs were a niche curiosity, not a real commodity, and few understood their value.

Eventually, that changed. With Apple and IBM leading the movement, operating systems and GUIs began to see some mainstream acceptance. IBM released a PC model in 1981, but it was too expensive for most people to own in their homes. When Apple came along with the first Macintosh computer in 1984, more people took notice and started buying. Over time, these machines became more advanced, with bigger screens, higher resolutions and a whole lot more going on.

Novelty in the nineties

Things really took off in the 1990s. With Mac OS and Microsoft Windows both gaining ground, we began to see a more diverse menu of options. People could now use their computer screens for handling a wide variety of tasks, ranging from word processing and spreadsheets to more complex applications, like managing photographs and other multimedia content.

Then along came the World Wide Web, which changed everything. Once web browsers became an omnipresent part of people’s computer use, people gained the ability to accomplish even more. Web apps, file sharing and more complicated graphical interfaces all became common in the 1990s as the information superhighway rose to prominence.

Innovation in the aughts

Since 2000, we’ve seen even more growth in terms of what graphical interfaces can do. We have countless web browsers, programs and online applications that we use to accomplish every minute task each day. We also have a more diverse array of screens. We’re no longer using just PCs with monitors – we also have tablets, smartphones and more, all with different screens and capabilities for displaying graphics.

In facilities management, we now have more ways than ever to use computers to accomplish countless tasks. The growth of the graphical user interface has played a major role in shaping our technology habits.